Decision Trees (DTs) are a non-parametric supervised learning method used for classification and regression. The goal is to create a model that predicts the value of a target variable.

Entropy: It's the measure of unpredictability in the dataset. For example, if we have a bucket of 5 different fruits, everything is mixed and hence its entropy is very high.

Information gain: It's the decrease in entropy after the dataset is split.

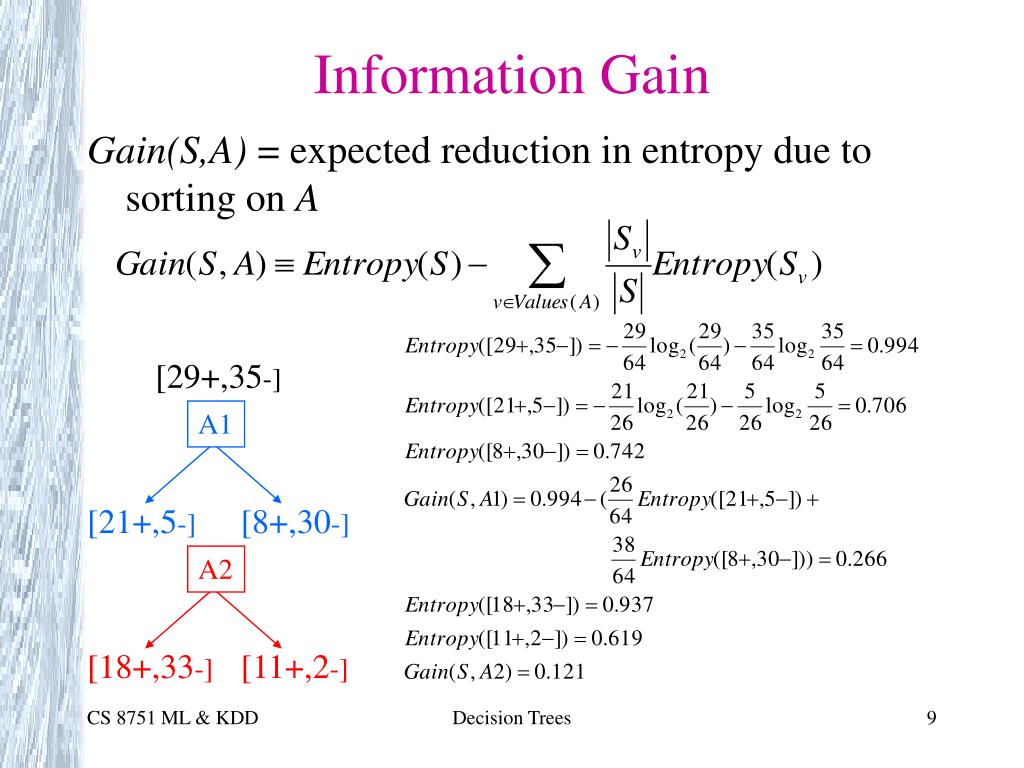
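
To make the entropy definition concrete, here is a minimal sketch of Shannon entropy computed over a list of class labels. The function name `entropy` and the fruit names are assumptions chosen for illustration; they do not come from the original post.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    # Sum p * log2(1 / p) over the classes that actually occur.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


# Hypothetical buckets: one fully mixed, one containing a single kind of fruit.
mixed_bucket = ["apple", "banana", "cherry", "mango", "orange"]
pure_bucket = ["apple"] * 5

print(entropy(mixed_bucket))  # log2(5), roughly 2.32 bits
print(entropy(pure_bucket))   # 0.0
```

The mixed bucket reaches the maximum entropy possible for five classes, while the bucket with a single kind of fruit has none.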

Overview of Decision Trees: a tree-structured model for classification, regression and probability estimation. Decision tree learning is a supervised learning approach used in statistics, data mining and machine learning. In this formalism, a classification or regression decision tree is used as a predictive model to draw conclusions about a set of observations.

Based on a recursive process of reducing the entropy, the general decision tree classifier with overlap has been analyzed. Several theorems have been proposed and proved. When the number of pattern classes is very large, the theorems can reveal both the advantages of a tree classifier and the main difficulties in its implementation. Suppose H is Shannon's entropy measure of the given problem. The theoretical results indicate that the tree searching time can be minimized to the order O(H), but the error rate is also in the same order O(H) due to error accumulation. However, the memory requirement is in the order O(H exp(H)), which poses serious problems in the implementation of a tree classifier for a large number of classes. To solve these problems, several theorems related to the bounds on the search time, error rate, memory requirement and overlap factor in the design of a decision tree have been proposed, and some principles have been established to analyze the behaviors of the decision tree. When applied to classify sets of 64, 450, and 3200 Chinese characters, respectively, the experimental results support the theoretical predictions. For 3200 classes, a very high recognition rate of 99.88 percent was achieved at a high speed of 873 samples/s when the experiment was conducted on a Cyber 172 computer using a high-level language.

Cross Entropy vs Entropy (Decision Tree): Several papers/books I have read say that cross-entropy is used when looking for the best split in a classification tree, e.g. The Elements of Statistical Learning (Hastie, Tibshirani, Friedman), without even mentioning entropy in the context of classification trees.

Important terminology used with decision trees includes entropy and information gain (IG). The goal of each split in a decision tree is to move from a confused dataset to two (or more) purer subsets. Ideally, the split should lead to subsets with an entropy of 0.0. In practice, however, it is enough if the split leads to subsets with a total lower entropy than the original dataset.

Thus, you can see that the purpose of getting the entropy is to help us get the IG, because the IG is the change in entropy of the original node before and after splitting. The feature with the highest IG will be the top decision node. High entropy at a node means that there's a lot of impurity, which can be split further down the tree.

Decision Tree: Another Example. Deciding whether to play or not to play tennis on a Saturday is a binary classification problem (play vs no-play). Each input (a Saturday) has 4 features, including Outlook and Temp.
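
The idea that the feature with the highest information gain becomes the top decision node can be sketched as follows. This is only an illustration under stated assumptions: the six rows are invented toy data (not the actual play-tennis table), and the names `information_gain`, `rows`, `gains` and `root_feature` are hypothetical.

```python
import math
from collections import Counter, defaultdict


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    return sum((c / total) * math.log2(total / c) for c in Counter(labels).values())


def information_gain(rows, feature, target):
    """Entropy of the parent node minus the weighted entropy of its children."""
    parent = entropy([row[target] for row in rows])
    groups = defaultdict(list)
    for row in rows:
        groups[row[feature]].append(row[target])
    children = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return parent - children


# Hypothetical toy data, invented for illustration only.
rows = [
    {"outlook": "sunny", "temp": "hot", "play": "no"},
    {"outlook": "sunny", "temp": "mild", "play": "no"},
    {"outlook": "overcast", "temp": "hot", "play": "yes"},
    {"outlook": "overcast", "temp": "mild", "play": "yes"},
    {"outlook": "rain", "temp": "mild", "play": "yes"},
    {"outlook": "rain", "temp": "hot", "play": "no"},
]

# Compute the gain for each candidate feature and pick the largest one.
gains = {f: information_gain(rows, f, "play") for f in ("outlook", "temp")}
root_feature = max(gains, key=gains.get)
print(gains, root_feature)
```

On this toy data, splitting on `outlook` leaves two of the three subsets completely pure, so it has the larger gain and would be chosen as the top decision node.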
